Why Multi-AI Evaluation Matters: Eliminating Bias in Training Assessment

Single-model AI evaluations introduce systematic bias into training scores. Learn how consensus-based multi-model evaluation delivers fairer, more reliable assessments.

Roleplays Team

If your organization uses AI to evaluate employee training, there is a question you should be asking: how confident are you that a single algorithm is scoring fairly?

Research from Stanford’s Human-Centered AI group has shown that individual language models exhibit measurable stylistic and cultural biases when grading open-ended responses. Relying on one model is like having a single examiner grade every essay in a university — consistent, perhaps, but consistently skewed.

Multi-AI evaluation fixes this by design.

The Problem with Single-Model Evaluation

Every AI model is a product of its training data, its architecture, and the objectives it was optimized for. That means every model has blind spots:

- Verbosity bias — certain models consistently reward longer answers, even when a concise response is objectively better

- Formality preference — models trained on academic text may penalize conversational language, even in sales contexts where warmth matters

- Cultural framing — a model may default to North American business norms, unfairly penalizing approaches effective in Latin American or Asian markets

- Anchoring to phrasing — some models latch onto specific keywords rather than evaluating the substance of a response

For high-stakes training — pharmaceutical compliance, financial regulation, safety procedures — that level of inconsistency is unacceptable.

“If you wouldn’t trust a single human grader to evaluate all your employees, why would you trust a single AI model?”

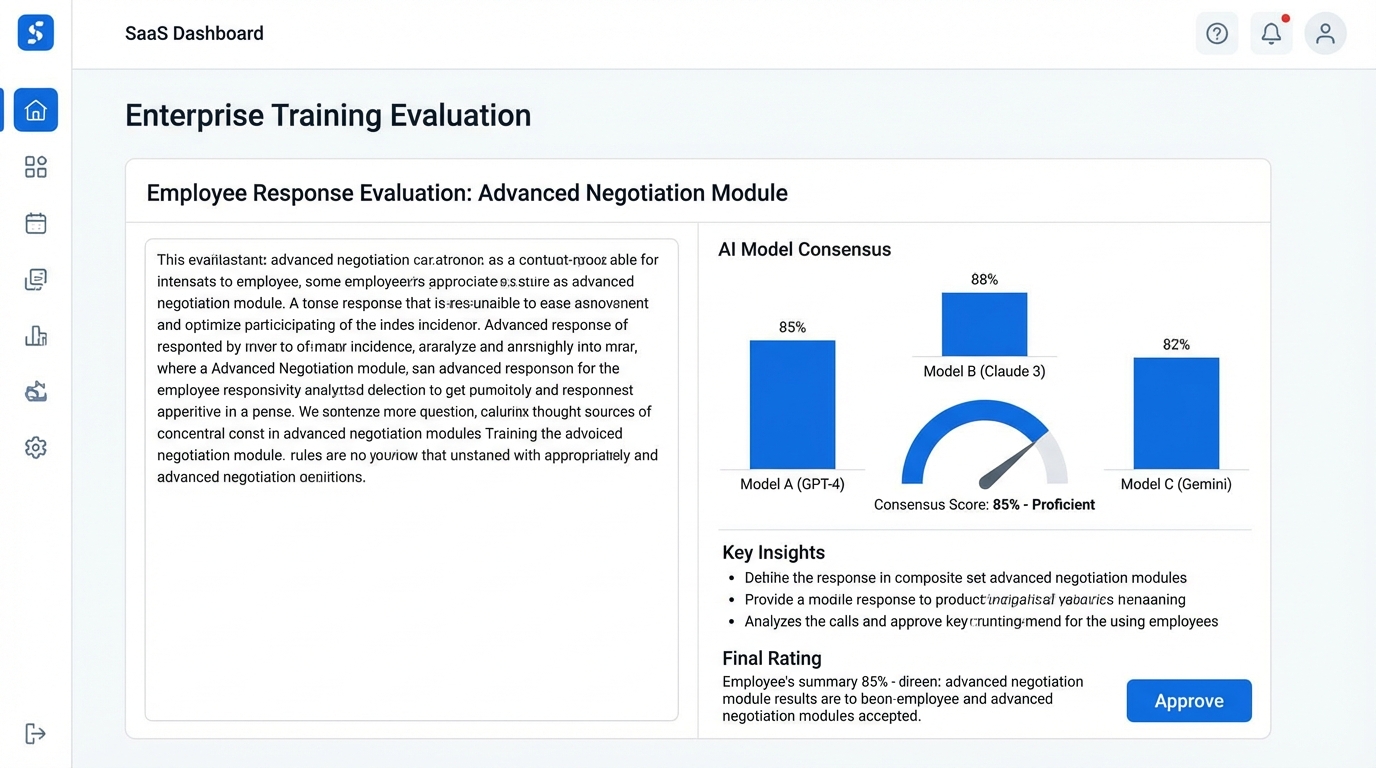

How Consensus-Based Scoring Works

Consensus-based evaluation routes each trainee response through multiple independent AI evaluators. Each model scores the response against the same rubric without seeing the others' scores. The final score is derived through aggregation, typically a weighted median, which limits the influence of outlier scores.

The three-step process

Step 1: Independent evaluation

Each AI model receives the same rubric, scenario context, and trainee response. Models do not see each other’s scores. This keeps the evaluations independent, in the same way that peer reviewers write their reports without seeing one another’s.
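As a rough illustration of this step, the sketch below sends one immutable request to several evaluators in parallel and collects their scores without sharing them. The names `EvaluationRequest`, `evaluate_independently`, and the three stub evaluators are hypothetical stand-ins, not part of any real product or API; a real evaluator would call a model instead of returning a fixed value.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)  # frozen: no evaluator can alter the shared input
class EvaluationRequest:
    rubric: str
    scenario: str
    response: str


# An evaluator is any callable that maps a request to a numeric score.
Evaluator = Callable[[EvaluationRequest], float]


def evaluate_independently(
    request: EvaluationRequest, evaluators: dict[str, Evaluator]
) -> dict[str, float]:
    """Send the identical request to every evaluator; none sees another's score."""
    with ThreadPoolExecutor(max_workers=len(evaluators)) as pool:
        futures = {name: pool.submit(fn, request) for name, fn in evaluators.items()}
        return {name: future.result() for name, future in futures.items()}


# Stand-in evaluators for illustration only.
def strict_stub(request: EvaluationRequest) -> float:
    return 3.0


def lenient_stub(request: EvaluationRequest) -> float:
    return 4.0


def length_biased_stub(request: EvaluationRequest) -> float:
    # Mimics verbosity bias: longer answers score higher, capped at 5.
    return min(5.0, 1.0 + len(request.response.split()) / 5)
```

Each evaluator receives only the request, so the only way scores can influence one another is through the aggregation step that follows.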

Step 2: Divergence detection

When scores diverge beyond a configurable threshold (e.g., more than 1.5 standard deviations), the system flags the response for human review. This catches edge cases where the scenario or rubric itself may be ambiguous.
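One possible reading of this check is sketched below. The article does not say which distribution the standard deviations refer to, so this example assumes a reference spread, `baseline_sd` (for instance, the historical standard deviation of scores on the same rubric item), and flags a response when the gap between the highest and lowest model score exceeds `k` times that value. Both `flag_divergence` and `baseline_sd` are hypothetical names.

```python
def flag_divergence(
    scores: dict[str, float], baseline_sd: float, k: float = 1.5
) -> bool:
    """Return True when model scores disagree by more than k baseline SDs.

    baseline_sd is an assumed reference spread, not something the article
    defines; the comparison uses the range (max minus min) of the scores.
    """
    if len(scores) < 2:
        return False  # nothing to compare against
    spread = max(scores.values()) - min(scores.values())
    return spread > k * baseline_sd
```

An external reference is used here because a small panel's own standard deviation makes a poor yardstick: with three evaluators, no score can sit more than about 1.41 standard deviations from the panel mean (about 1.15 with the sample standard deviation), so a 1.5 threshold measured that way would never fire.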

Step 3: Score synthesis

Final scores are computed by aggregating across models, with optional weighting based on each model’s validated accuracy for the specific competency being assessed.
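A minimal sketch of this synthesis step, assuming the weighted median mentioned earlier is the aggregator. The weights in `WEIGHTS_BY_COMPETENCY` and the model names are invented for illustration; in practice they would come from validating each model against expert graders.

```python
# Hypothetical per-competency weights, for illustration only.
WEIGHTS_BY_COMPETENCY = {
    "technical": {"model_a": 3.0, "model_b": 1.0, "model_c": 1.0},
    "interpersonal": {"model_a": 1.0, "model_b": 1.0, "model_c": 1.0},
}


def weighted_median(scores: dict[str, float], weights: dict[str, float]) -> float:
    """Lower weighted median: the smallest score whose cumulative weight
    reaches half of the total weight."""
    if not scores:
        raise ValueError("no scores provided")
    ranked = sorted(scores.items(), key=lambda item: item[1])
    total = sum(weights[name] for name, _ in ranked)
    if total <= 0:
        raise ValueError("weights must sum to a positive value")
    cumulative = 0.0
    for name, score in ranked:
        cumulative += weights[name]
        if cumulative >= total / 2:
            return score
    return ranked[-1][1]  # only reachable through floating-point rounding


def synthesize(scores: dict[str, float], competency: str) -> float:
    """Aggregate model scores using the weights for one competency."""
    return weighted_median(scores, WEIGHTS_BY_COMPETENCY[competency])
```

With equal weights, scores of 4.0, 4.2, and 1.0 synthesize to 4.0, whereas a plain mean would be pulled down to about 3.07 by the single low score.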

Why This Matters for Compliance-Driven Training

In regulated industries, training evaluation is not just a development tool — it is auditable evidence.

When a pharmaceutical company demonstrates GMP compliance to ANVISA, or a financial institution proves suitability training to a regulator, the integrity of the evaluation method matters.

Single-model evaluation introduces a single point of failure. Multi-model consensus gives you:

| Benefit | What it means |
|---|---|
| Statistical robustness | Aggregated scores are demonstrably more stable than individual scores |
| Audit transparency | Show that the same response was evaluated independently by multiple systems |
| Defensible methodology | Consensus scoring aligns with established psychometric principles |

Organizations operating under ISO/IEC 17024 or similar standards will find that multi-model evaluation maps cleanly to existing requirements for inter-rater reliability.

Practical Benefits Beyond Bias Reduction

Reducing bias is the headline benefit, but multi-AI evaluation delivers other advantages:

Richer feedback

Different models notice different aspects of a response. One may flag a missed compliance disclosure; another may note that the tone was appropriate but lacked empathy. Synthesizing feedback from multiple evaluators produces more comprehensive coaching.

Calibration over time

By tracking how individual models score relative to the consensus, you can identify when a model is drifting and needs recalibration. This is significantly harder to detect in a single-model setup.
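One simple way to track this, sketched under assumptions: treat the plain median of the panel as the consensus, compute each model's average signed gap from it, and compare an earlier period with a recent one. The function names and the idea of splitting history into two periods are illustrative choices, not a description of any particular system.

```python
from statistics import mean, median


def mean_offsets(history: list[dict[str, float]]) -> dict[str, float]:
    """Average signed gap between each model's score and the panel median."""
    gaps: dict[str, list[float]] = {}
    for scores in history:
        consensus = median(scores.values())
        for name, score in scores.items():
            gaps.setdefault(name, []).append(score - consensus)
    return {name: mean(values) for name, values in gaps.items()}


def offset_shift(
    earlier: list[dict[str, float]], recent: list[dict[str, float]]
) -> dict[str, float]:
    """How far each model's average offset has moved between two periods."""
    before = mean_offsets(earlier)
    after = mean_offsets(recent)
    return {name: after[name] - before[name] for name in after if name in before}
```

A shift near zero suggests a model is behaving as before, even if it has a constant offset; a large shift in either direction is the signal that it may need recalibration.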

Scenario-specific accuracy

Some models perform better on technical knowledge assessments; others excel at evaluating interpersonal skills. Multi-model systems let you weight evaluators by competency domain.

Getting Started with Multi-Model Evaluation

Transitioning from single-model to multi-model evaluation does not require rebuilding your training program:

- Define rubrics explicitly: Replace vague criteria like “demonstrates product knowledge” with behavioral specifics: “correctly states the three primary indications and at least one contraindication.”

- Set divergence thresholds: Decide how much inter-model disagreement triggers human review. For compliance training, tighter thresholds are appropriate.

- Validate against human benchmarks: Before going live, run a sample through both multi-model evaluation and expert human graders. Measure correlation and adjust weighting.

- Monitor continuously: Track consensus rates, divergence frequency, and score distributions over time. These metrics are your early warning system.
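The validation and monitoring steps above can be sketched with a few lines of Python. This example assumes you already have paired lists of consensus scores and expert human scores for the same responses, plus a list of booleans recording which responses were flagged for divergence. All function names are hypothetical.

```python
from math import sqrt
from statistics import mean


def pearson_r(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation; raises ZeroDivisionError if either list is constant."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equally sized lists with at least two items")
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


def agreement_report(consensus: list[float], human: list[float]) -> dict[str, float]:
    """Correlation plus mean absolute error between consensus and human scores."""
    return {
        "pearson_r": pearson_r(consensus, human),
        "mean_abs_error": mean(abs(c - h) for c, h in zip(consensus, human)),
    }


def divergence_rate(flags: list[bool]) -> float:
    """Share of responses that were flagged for human review."""
    return sum(flags) / len(flags) if flags else 0.0
```

Reporting the mean absolute error alongside the correlation is a deliberate choice: a panel that scores every response half a point too high still correlates perfectly with human graders, and only the error term reveals the offset.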

Conclusion

The era of trusting a single AI to judge training performance is ending. As organizations demand better compliance evidence, fairer evaluation, and actionable feedback, multi-model consensus scoring is becoming the standard.

Roleplays uses multi-AI evaluation by default, ensuring that every simulated conversation is scored fairly, consistently, and with full auditability.
